The AD/ADAS Pack is designed for engineers responsible for testing and validating advanced driver-assistance systems (ADAS), and for researchers who want to study human-factors behavior when drivers use ADAS.

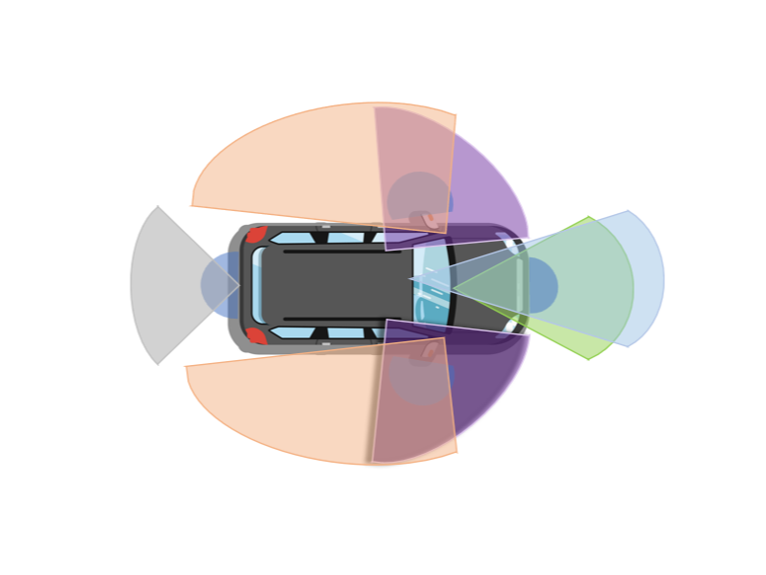

Examples: LiDAR, radar, ultrasonic, lighting, e-horizon, camera.

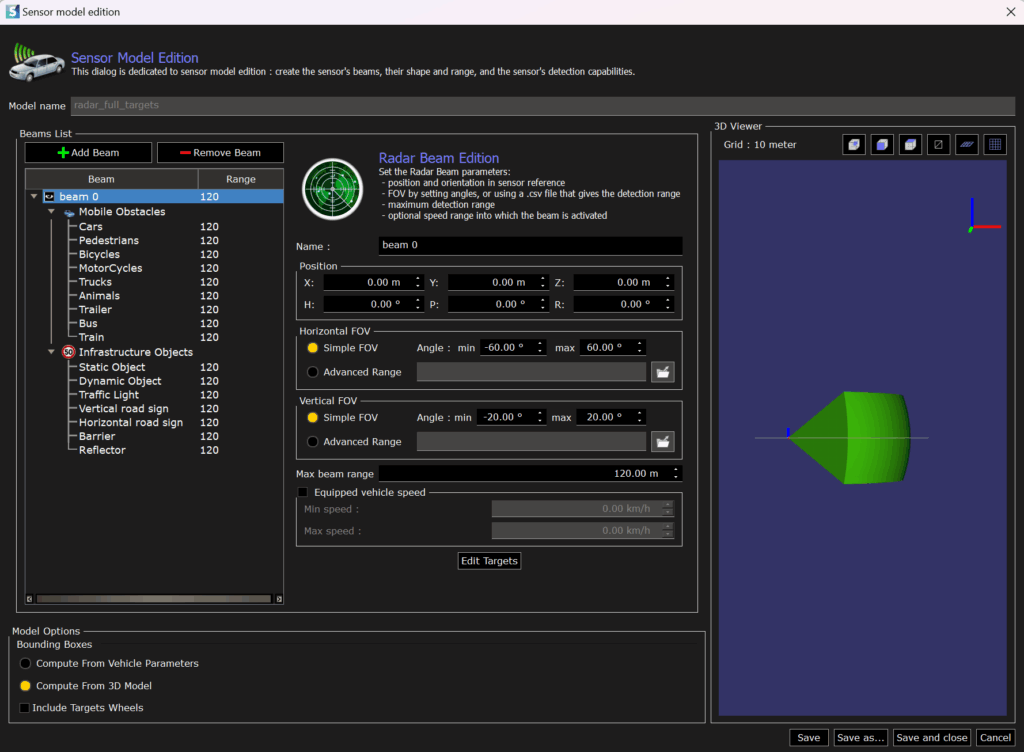

The default SCANeR studio dataset includes sample sensor models for camera, GPS, lidar, radar and ultrasonic.

These sensor models can be fully customized through Python scripting, including anchor-point selection, custom noise models and detection errors.
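As a rough illustration of what a custom noise model with detection errors might look like, here is a minimal, standalone Python sketch. It does not use the SCANeR studio scripting API: the `Detection` class, the `apply_sensor_model` function and its parameters (Gaussian range and azimuth noise, a fixed miss probability) are hypothetical and chosen only to show the idea.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical ideal (ground-truth) target, as seen from the sensor anchor point."""
    range_m: float
    azimuth_deg: float


def apply_sensor_model(detections, range_sigma_m=0.2, azimuth_sigma_deg=0.5,
                       miss_probability=0.05, rng=None):
    """Hypothetical custom sensor model: Gaussian measurement noise plus random misses.

    Generic illustration only; this is NOT the SCANeR studio scripting API.
    """
    if rng is None:
        rng = random.Random()
    noisy = []
    for det in detections:
        # Detection error: drop the target with a fixed probability.
        if rng.random() < miss_probability:
            continue
        # Measurement noise: zero-mean Gaussian on range (clamped at 0) and azimuth.
        noisy_range = max(0.0, det.range_m + rng.gauss(0.0, range_sigma_m))
        noisy_azimuth = det.azimuth_deg + rng.gauss(0.0, azimuth_sigma_deg)
        noisy.append(Detection(noisy_range, noisy_azimuth))
    return noisy


if __name__ == "__main__":
    truth = [Detection(25.0, -3.0), Detection(60.0, 1.5), Detection(110.0, 0.0)]
    # A seeded generator makes the noisy run reproducible.
    measured = apply_sensor_model(truth, rng=random.Random(42))
    for det in measured:
        print(f"{det.range_m:7.2f} m  {det.azimuth_deg:6.2f} deg")
```

Seeding the random generator, as in the `__main__` block, keeps a noisy run reproducible, which is useful when comparing system behavior across repeated simulations.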

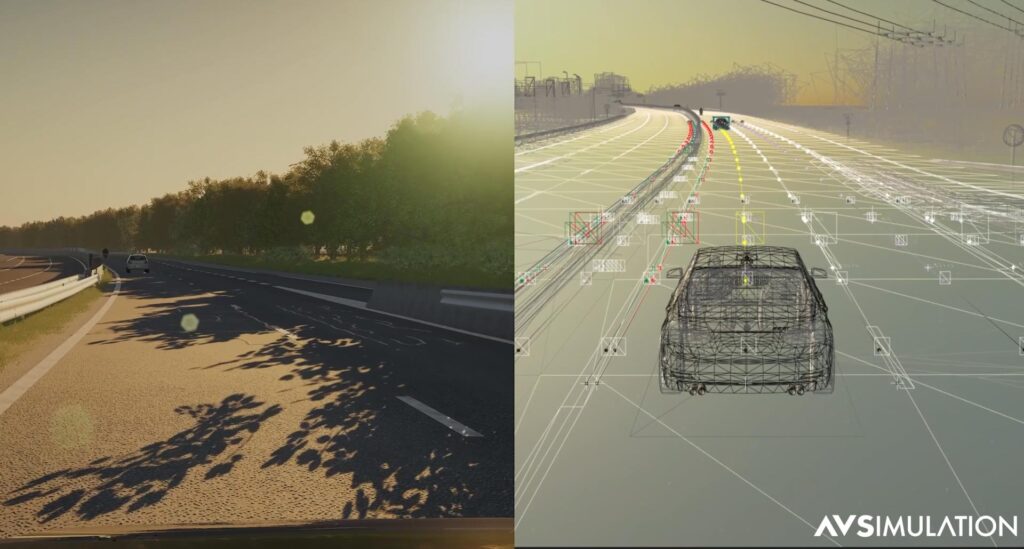

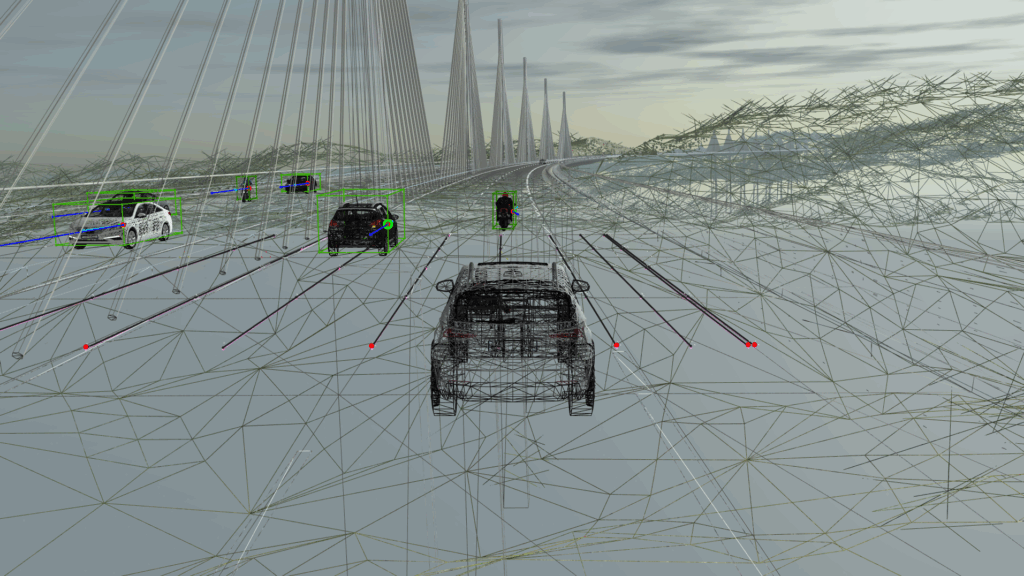

Sensor Viewer

User-Friendly Interface

Engineers working on ADAS functions such as AEB, ACC and LKA can test and validate their systems in an unlimited number of known and unknown situations created in SCANeR, as required for example by SOTIF (ISO 21448) analyses. Because SCANeR can model a large number of sensors, it supports validation at any driving automation level (1 to 5).
